In the ever-evolving landscape of cloud computing, AWS Lambda has emerged as a game-changer, enabling developers to build and deploy serverless applications with ease. With its robust features and scalable architecture, Lambda has become an indispensable tool for businesses seeking to optimize performance, reduce costs, and enhance productivity.

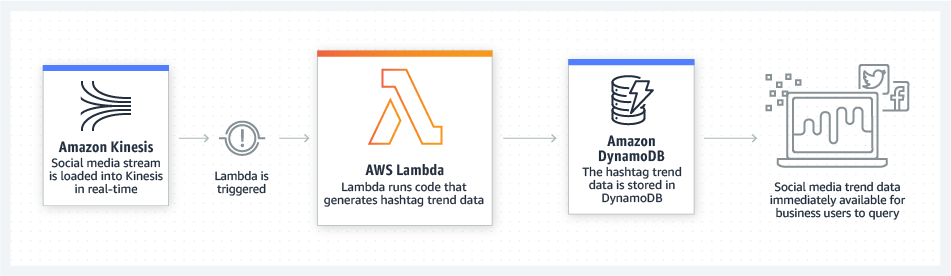

One of the key use cases for AWS Lambda revolves around calling DynamoDB, Amazon’s fully managed NoSQL database service. This powerful combination allows developers to build efficient, event-driven architectures that pair Lambda’s event-driven execution model with DynamoDB’s seamless scalability. Whether it’s processing real-time data streams, automating data transformations, or executing complex business logic, AWS Lambda and DynamoDB provide a winning solution.

AWS Lambda pricing

When it comes to pricing, AWS Lambda follows a pay-as-you-go model. You pay for the compute time your functions actually consume, multiplied by the memory you have allocated to them, plus a small fixed charge per request. Duration is billed in 1 ms increments, so you pay only for the execution time your code requires. This makes Lambda an attractive choice for organizations of all sizes.
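As a formula, the bill for a single function can be sketched roughly as follows. The notation is our own, and the sketch ignores the free tier and additional charges such as extra ephemeral storage:

$$
C = N \cdot \left( \frac{M}{1024} \cdot \frac{\lceil t \rceil}{1000} \cdot p_{\text{GB-s}} + p_{\text{req}} \right)
$$

Here $N$ is the number of invocations and $M$ is the configured memory in MB (Lambda counts 1 GB as 1024 MB). $t$ is the duration of one invocation in ms, rounded up to the next millisecond for billing. $p_{\text{GB-s}}$ is the price per GB-second and $p_{\text{req}}$ is the price per request.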

Another advantage of AWS Lambda is its extensive language support. Developers can write Lambda functions in a wide range of programming languages, including popular options like Python, JavaScript (Node.js), Java, and C#. Languages without a managed runtime, such as Rust, as well as GraalVM native executables, can run on Lambda’s OS-only runtimes (for example `provided.al2`). This versatility lets developers work with the languages they are most comfortable with, increasing productivity and reducing the learning curve.

Lambda for Java developers

As a Java developer, you might be wondering how Java performs in comparison to GraalVM native image builds and Rust. Why did we choose GraalVM and Rust as benchmark competitors? Well, GraalVM offers the advantage of allowing you to continue using the Java language while compiling it into native code. On the other hand, Rust is a powerful language with its own syntax, known for its exceptionally low memory consumption and fast execution times.

In the context of AWS Lambda pricing and high-load use cases, the combination of memory consumption and execution time plays a crucial role, because the duration cost is proportional to the allocated memory multiplied by the billed execution time. Let’s assume you have a Lambda function with 256 MB that runs 10 ms per invocation. You can cut its duration cost by 50% if you manage to run it in 5 ms per invocation, but this is usually not the easiest way to achieve savings. Alternatively, you can cut the cost by 50% if you manage to run the function with 128 MB at 10 ms, which is usually the easier way to go.
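The arithmetic behind this example can be checked with a few lines of Python. The helper `gb_seconds` is our own illustration, not part of any AWS SDK. It leaves out prices and the per-request charge, because the 50% argument only concerns the relative duration cost:

```python
def gb_seconds(memory_mb: float, duration_ms: float) -> float:
    """Billable GB-seconds of one invocation (Lambda counts 1 GB as 1024 MB)."""
    return (memory_mb / 1024) * (duration_ms / 1000)


baseline = gb_seconds(256, 10)  # 256 MB, 10 ms per invocation
faster = gb_seconds(256, 5)     # same memory, half the execution time
smaller = gb_seconds(128, 10)   # half the memory, same execution time

# Both alternatives halve the duration cost of the baseline.
print(f"faster:  {faster / baseline:.0%} of the baseline duration cost")
print(f"smaller: {smaller / baseline:.0%} of the baseline duration cost")
```

Note that with less memory, Lambda also allocates less CPU, so keeping the same 10 ms at 128 MB is something you have to verify by measurement.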

By optimizing these factors, you can effectively control the costs associated with AWS Lambda. That’s why we’ve selected GraalVM and Rust as benchmark competitors, as they both have features that can potentially address the performance and cost considerations for such use cases.

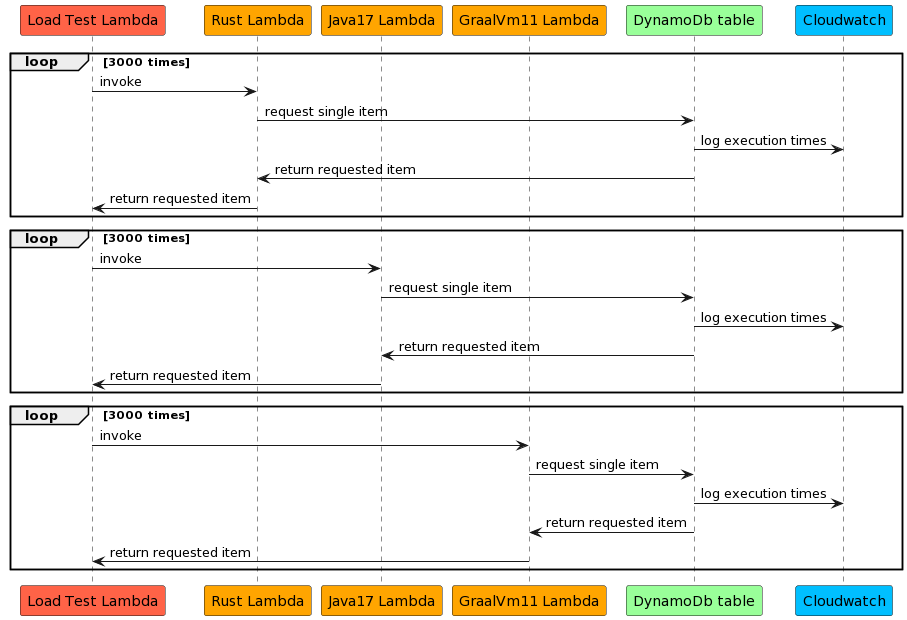

Loadtest architecture

We have developed Lambda functions in Rust, Java, and GraalVM that interact with a DynamoDB table. To conduct a load test, we created a dedicated Lambda function named "Load Test Lambda" that invokes each of these functions 3000 times. We use CloudWatch Logs Insights to gather the execution times of each invocation, which lets us analyze and compare the results.
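The article does not show the code of the Load Test Lambda. Below is a minimal sketch of its core loop, written in Python under two assumptions. The `invoke` callable and the `run_load_test` function are hypothetical names introduced here. The concurrency level is also an assumption, because the article does not state it:

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Iterable

# Hypothetical signature: invoke(function_name) calls the Lambda function
# synchronously and returns True if the invocation succeeded.
Invoke = Callable[[str], bool]


def run_load_test(
    invoke: Invoke,
    function_names: Iterable[str],
    calls_per_function: int = 3000,
    concurrency: int = 4,  # assumption: the article does not state the concurrency
) -> Counter:
    """Invoke every function `calls_per_function` times and count the successes."""
    successes: Counter = Counter()
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        for name in function_names:
            # Bind `name` as a default argument so each task targets the right function.
            results = pool.map(lambda _, fn=name: invoke(fn), range(calls_per_function))
            successes[name] = sum(1 for ok in results if ok)
    return successes
```

In a real Load Test Lambda, `invoke` could wrap `boto3.client("lambda").invoke(FunctionName=name, Payload=b"{}")`. It would treat a response with `StatusCode` 200 and no `FunctionError` key as a success. Passing the callable in keeps the loop testable without AWS credentials.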

CloudWatch Logs Insights

We used the following query to summarize the Lambda execution times. For cold starts, it adds the init duration to the invocation duration:

```
filter @type = "REPORT"
| parse @log /\d+:\/aws\/lambda\/(?<function>.*)/
| stats
    count(*) as calls,
    avg(@duration+coalesce(@initDuration,0)) as avg_duration,
    pct(@duration+coalesce(@initDuration,0), 0) as p0,
    pct(@duration+coalesce(@initDuration,0), 25) as p25,
    pct(@duration+coalesce(@initDuration,0), 50) as p50,
    pct(@duration+coalesce(@initDuration,0), 75) as p75,
    pct(@duration+coalesce(@initDuration,0), 90) as p90,
    pct(@duration+coalesce(@initDuration,0), 95) as p95,
    pct(@duration+coalesce(@initDuration,0), 100) as p100
  by function, ispresent(@initDuration) as coldstart
| sort by coldstart, function
```

Benchmark results

All durations are in milliseconds; for cold starts they include the init duration.

| lambda function name | cold start | calls | avg duration | p0 | p25 | p50 | p75 | p90 | p95 | p100 |
|---|---|---|---|---|---|---|---|---|---|---|
| rust-128mb | 0 | 2999 | 5.5713 | 3.09 | 3.8752 | 4.5086 | 6.1518 | 8.8049 | 10.1628 | 53.38 |
| rust-256mb | 0 | 2998 | 4.6524 | 3.05 | 3.7836 | 4.0649 | 4.5447 | 5.5024 | 7.3305 | 58.1 |
| graalvm-128mb | 0 | 2998 | 10.024 | 3.75 | 5.1292 | 8.3899 | 10.988 | 17.4128 | 23.5422 | 304.62 |
| graalvm-256mb | 0 | 2999 | 4.5232 | 2.98 | 3.6649 | 3.9689 | 4.4021 | 5.2455 | 7.1573 | 121.16 |
| graalvm-512mb | 0 | 2998 | 5.1829 | 3.48 | 4.2934 | 4.5766 | 5.0167 | 6.2986 | 8.1972 | 62.73 |
| graalvm-1024mb | 0 | 2998 | 5.5019 | 3.47 | 4.4021 | 4.6918 | 5.2875 | 6.8232 | 8.735 | 104.79 |
| java17-256mb | 0 | 2997 | 19.488 | 5.87 | 9.5259 | 14.857 | 25.0855 | 31.8294 | 47.3338 | 303.16 |
| java17-256mb-arm | 0 | 2997 | 19.5547 | 5.69 | 9.8332 | 14.1661 | 25.4869 | 31.3281 | 47.3338 | 367.87 |
| java17-512mb | 0 | 2998 | 9.5256 | 5.59 | 6.9883 | 7.751 | 9.1628 | 12.5023 | 20.9857 | 83.93 |
| java17-512mb-arm | 0 | 2998 | 9.0535 | 5.61 | 6.5567 | 7.1005 | 8.5287 | 12.014 | 20.006 | 153.5 |
| java17-1024mb | 0 | 2998 | 8.8418 | 5.4 | 6.9328 | 7.6895 | 9.0901 | 11.7302 | 14.6622 | 85.82 |
| java17-1024mb-arm | 0 | 2998 | 7.9314 | 4.98 | 6.6076 | 7.538 | 8.3292 | 9.6935 | 11.2365 | 55.55 |
| rust-128mb | 1 | 1 | 132.95 | 132.95 | 132.95 | 132.95 | 132.95 | 132.95 | 132.95 | 132.95 |
| rust-256mb | 1 | 2 | 164.165 | 119.62 | 119.62 | 119.62 | 208.71 | 208.71 | 208.71 | 208.71 |
| graalvm-128mb | 1 | 2 | 772.58 | 746.67 | 746.67 | 746.67 | 798.49 | 798.49 | 798.49 | 798.49 |
| graalvm-256mb | 1 | 1 | 531.75 | 531.75 | 531.75 | 531.75 | 531.75 | 531.75 | 531.75 | 531.75 |
| graalvm-512mb | 1 | 2 | 544.09 | 542.67 | 542.67 | 542.67 | 545.51 | 545.51 | 545.51 | 545.51 |
| graalvm-1024mb | 1 | 2 | 578.57 | 577.04 | 577.04 | 577.04 | 580.1 | 580.1 | 580.1 | 580.1 |
| java17-256mb | 1 | 3 | 5887.873 | 5851.69 | 5851.69 | 5863.74 | 5948.19 | 5948.19 | 5948.19 | 5948.19 |
| java17-256mb-arm | 1 | 3 | 5902.303 | 5670.59 | 5670.59 | 5920.52 | 6115.8 | 6115.8 | 6115.8 | 6115.8 |
| java17-512mb | 1 | 2 | 3706.48 | 3678.29 | 3678.29 | 3678.29 | 3734.67 | 3734.67 | 3734.67 | 3734.67 |
| java17-512mb-arm | 1 | 2 | 3658.12 | 3632.51 | 3632.51 | 3632.51 | 3683.73 | 3683.73 | 3683.73 | 3683.73 |
| java17-1024mb | 1 | 2 | 2388.255 | 2372.76 | 2372.76 | 2372.76 | 2403.75 | 2403.75 | 2403.75 | 2403.75 |
| java17-1024mb-arm | 1 | 2 | 2492.53 | 2442.05 | 2442.05 | 2442.05 | 2543.01 | 2543.01 | 2543.01 | 2543.01 |
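You may want to reproduce these statistics outside of Logs Insights, for example from exported log lines of a single function. In that case, the aggregation can be sketched in Python. The regular expressions assume the usual tab-separated REPORT line format. The nearest-rank percentile is our own choice and may differ slightly from how Logs Insights computes `pct` on large samples. The grouping by function name is left out for brevity:

```python
import math
import re
from collections import defaultdict
from typing import Dict, Iterable, List, Optional, Tuple

# Lambda writes one REPORT line per invocation; "Init Duration" only appears on cold starts.
DURATION_RE = re.compile(r"RequestId: \S+\s+Duration: ([\d.]+) ms")
INIT_RE = re.compile(r"Init Duration: ([\d.]+) ms")
PERCENTILES = (0, 25, 50, 75, 90, 95, 100)


def parse_report(line: str) -> Optional[Tuple[float, bool]]:
    """Return (duration + init duration in ms, is_cold_start), or None for other lines."""
    if not line.startswith("REPORT"):
        return None
    duration = DURATION_RE.search(line)
    if duration is None:
        return None
    init = INIT_RE.search(line)
    init_ms = float(init.group(1)) if init else 0.0  # like coalesce(@initDuration, 0)
    return float(duration.group(1)) + init_ms, init is not None


def percentile(sorted_values: List[float], p: int) -> float:
    """Nearest-rank percentile: p=0 yields the minimum, p=100 the maximum."""
    rank = max(1, math.ceil(p * len(sorted_values) / 100))
    return sorted_values[rank - 1]


def summarize(lines: Iterable[str]) -> Dict[bool, Dict[str, float]]:
    """Group one function's REPORT lines by cold start and compute the query's statistics."""
    groups: Dict[bool, List[float]] = defaultdict(list)
    for line in lines:
        parsed = parse_report(line)
        if parsed is not None:
            total_ms, cold = parsed
            groups[cold].append(total_ms)
    summary: Dict[bool, Dict[str, float]] = {}
    for cold, values in groups.items():
        values.sort()
        stats = {"calls": len(values), "avg": sum(values) / len(values)}
        stats.update({f"p{p}": percentile(values, p) for p in PERCENTILES})
        summary[cold] = stats
    return summary
```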

Compare cold start times

The result table includes a column called "coldstart", where a value of 1 indicates a cold start. In general, Java is known for its higher memory usage and slower startup times. AWS has introduced several measures to mitigate these concerns, including the SnapStart feature [1]. For our specific test case, however, we compared raw startup times without applying this optimization.

The startup times show that Java’s performance depends heavily on the allocated memory. Because Lambda allocates CPU power in proportion to the configured memory, more memory means a faster startup. Java needs about 3.7 seconds at 512 MB and about 2.4 seconds at 1024 MB. At 256 MB, Rust starts in roughly 0.12 to 0.21 seconds, whereas Java takes around 5.8 seconds. GraalVM falls in between at about 0.5 seconds, which is generally acceptable for most use cases.

In certain asynchronous use cases, a startup time of 5.8 seconds might still be acceptable. However, if you choose Java, we recommend exploring the SnapStart feature mentioned earlier to reduce your startup times.

[1] For more information about the SnapStart feature, refer to: https://docs.aws.amazon.com/lambda/latest/dg/snapstart.html

Compare hot lambda performance

With a memory allocation of 256 MB, Java shows an average execution time of approximately 19.5 ms, while GraalVM is significantly faster at 4.5 ms. Since both run with the same memory, the duration cost scales directly with execution time. As a result, Java can cost roughly four times as much as GraalVM per invocation.

When we bring Rust into the comparison, it performs well even with only 128 MB of memory, with an average execution time of 5.6 ms. GraalVM at 128 MB, on the other hand, appears to struggle with the lower memory limit. Its average rises to 10.0 ms and its response times become less stable, with a p100 of 304.6 ms. Depending on the specific use case, our results indicate that Rust can reduce costs by up to 50% compared to GraalVM.
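To see where these ratios come from, here is a rough duration-cost comparison based on the average warm durations in the table. It is a simplification. It uses averages instead of per-invocation billed durations, which Lambda rounds up to the next millisecond, and it ignores the per-request charge. The `relative_cost` helper is our own illustration:

```python
# (memory in MB, average warm duration in ms), copied from the benchmark table.
CONFIGS = {
    "java17-256mb": (256, 19.488),
    "graalvm-128mb": (128, 10.024),
    "graalvm-256mb": (256, 4.5232),
    "rust-128mb": (128, 5.5713),
}


def relative_cost(a: str, b: str) -> float:
    """Duration cost of configuration `a` relative to `b` (memory x average duration)."""
    mem_a, ms_a = CONFIGS[a]
    mem_b, ms_b = CONFIGS[b]
    return (mem_a * ms_a) / (mem_b * ms_b)


for a, b in [("java17-256mb", "graalvm-256mb"),
             ("rust-128mb", "graalvm-256mb"),
             ("rust-128mb", "graalvm-128mb")]:
    print(f"{a} costs {relative_cost(a, b):.2f}x of {b}")
```

On these averages, Java at 256 MB comes out at a little more than four times the GraalVM cost, and Rust at 128 MB at roughly 40% below GraalVM.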

Arm64 vs x86 runtime architecture

Our benchmark results show that Java runtimes on the arm64 architecture run up to 10% faster than on x86 (7.9 ms vs. 8.8 ms on average at 1024 MB), although at 256 MB we measured no meaningful difference. Combined with the lower arm64 price, this gives arm64 a better price-performance ratio. An AWS blog post [1] likewise reports better price performance for arm64 than for x86, with arm64 functions billed at a lower price per GB-second.

Because the same Java artifact runs on both arm64 and x86, we generally recommend arm64 as the preferred Lambda execution architecture for Java runtimes. This lets you maximize the performance and cost efficiency of your Java-based Lambda functions.

[1] For further details, refer to: https://aws.amazon.com/de/blogs/aws/aws-lambda-functions-powered-by-aws-graviton2-processor-run-your-functions-on-arm-and-get-up-to-34-better-price-performance/
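The price-performance difference can be estimated by combining the measured durations with the price per GB-second. The prices below are an assumption: the us-east-1 list prices for the first pricing tier at the time of writing. Check the AWS Lambda pricing page for current values. The estimate also ignores billing rounding and request charges:

```python
# Assumed us-east-1 prices per GB-second (first pricing tier) at the time of
# writing; check the AWS Lambda pricing page for current values.
PRICE_PER_GB_S = {"x86_64": 0.0000166667, "arm64": 0.0000133334}

# Average warm durations (ms) of the Java 17 functions at 1024 MB, from the table.
AVG_MS_1024MB = {"x86_64": 8.8418, "arm64": 7.9314}
MEMORY_GB = 1.0  # 1024 MB


def duration_cost_per_million(arch: str) -> float:
    """Approximate USD duration cost of one million warm invocations at 1024 MB."""
    gb_seconds = MEMORY_GB * (AVG_MS_1024MB[arch] / 1000)
    return gb_seconds * PRICE_PER_GB_S[arch] * 1_000_000


x86 = duration_cost_per_million("x86_64")
arm = duration_cost_per_million("arm64")
print(f"x86_64: ${x86:.4f}, arm64: ${arm:.4f} per million invocations")
print(f"arm64 saves about {1 - arm / x86:.0%}")
```

At 1024 MB, arm64 is cheaper both because it runs faster and because each GB-second costs about 20% less. At 256 MB, where we saw no speed difference, the lower price alone still favors arm64.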

Conclusion

For Java developers with low-usage workloads, Java remains a straightforward and convenient choice. However, we advise minimizing dependencies on external libraries wherever possible to improve performance and reduce costs.

For high-load use cases, on the other hand, our benchmark results indicate that Rust outperforms the other options in both execution time and cost. This makes it a compelling choice for resource-intensive scenarios.

In the middle ground, GraalVM native builds offer an alternative with certain trade-offs. They let you keep writing Java code and leverage your existing expertise, and they can potentially reduce costs by up to 75% compared to a regular Java runtime. However, building Docker images for GraalVM native functions can be more complex, and there are limitations. For example, reflection generally has to be declared up front in the native-image build configuration. In addition, a native executable targets a single architecture, so supporting both arm64 and x86 means compiling a separate native artifact for each. A regular Java artifact, by contrast, runs unchanged on both.

Furthermore, it’s worth mentioning that Java still enjoys broader support, including Maven archetypes and extensive documentation, which can be advantageous when it comes to development workflows and community resources.

In summary, your choice of language for AWS Lambda depends on the specific requirements of your use case. For low usage scenarios, Java remains a convenient option, while Rust excels in high load situations where performance and cost efficiency are paramount. GraalVM offers a middle ground with potential cost savings, but it comes with complexities and certain limitations. Consider your project’s needs and trade-offs carefully to make an informed decision.