1. Introduction

The Next Big Thing in Software Development: that is how Gradle advertises a concept named Developer Productivity Engineering (DPE).

What is it actually? It is a set of practices meant to improve developer productivity and developer happiness. What do we mean by developer happiness, one may ask. Aren’t the devs happy? Most of you have probably fought with strange build errors, and those can cause real frustration. The same goes for rebuild times of 20 minutes or more after a small change. Frustration is one thing; the other is context switching and loss of focus in such cases (e.g. the build takes so long, so let’s do something else in the meantime). That lowers productivity, because getting back to the previous topic means recalling what it was about.

So we may safely say that DPE is about improving productivity and, along the way, bringing joy back to the developers’ work. 🙂

All this made us, the QA and dev team behind the product https://cm.consol.de, curious, and we decided to take a closer look.

2. Key topics of the DPE

How does the DPE try to solve the productivity/joy problems?

Key topics are:

- shorter build times and faster test cycles, so that a small change in the code does not require a rebuild of 20 minutes or more

- easier troubleshooting of build failures (not the obvious errors reported by compilers) by providing environment details such as Java versions, OS, memory settings, dependency problems, etc.

- getting rid of unstable (flaky) tests: detecting and handling tests which may randomly produce false alarms

- general build improvements based on good observability of the builds

A little disclaimer here:

It is Gradle, the company, that most actively supports the DPE concept. It also happens that Gradle provides the tools that solve some of the mentioned problems. Unfortunately, most of these tools are only available in the Gradle Enterprise (GE) system, which is not free. Getting a test version of GE is also not possible. Hence, instead of describing all the neat features of GE which we did not have a chance to explore in the context of Consol CM, we recommend just watching the demo (https://www.youtube.com/watch?v=4ARx80ns6XI). Additionally, you may want to play with GE yourself using the publicly available instances at https://gradle.com/enterprise-customers/oss-projects/

So in the rest of this post we will focus on the DPE elements which are available free of charge.

3. Shortening the build times and test cycles

How to do it? The ideas are:

- introduce a build cache (we will get back to it in detail in a moment)

- distribute test execution: run the tests in parallel on several nodes. Something similar is already done on our Jenkins, but here you would be able to run all of your _local_ tests in a distributed way. So far that option is only available in GE.

- run only the necessary tests, based on the changes made. That is also a GE module, called Predictive Test Selection: an AI model trained to select which tests to run.

So it looks like in this part we can only experiment with the build cache.

What is it actually? It’s a mechanism that allows only parts of a project to be rebuilt and so speeds up the whole build process.

How does it work? A given module of a project is probed at build time; that module and its dependencies are called the “project inputs”. If there is no cache entry yet, the build output is put into the cache storage together with the combined checksum of the inputs. The next build first calculates the checksum for the same set of project inputs and checks whether that checksum is already present in the cache. If it is, there is no need to rebuild that module (or to run its tests): the output is restored from the cache and further processing is skipped. If there is no such checksum, we need to rebuild the module and its dependencies.
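
To make the mechanism more tangible, here is a minimal sketch in Python. It is not how the Maven or Gradle cache is actually implemented: the names `inputs_checksum` and `BuildCache`, the in-memory dictionary used as cache storage and the choice of SHA-256 are all assumptions made for illustration.

```python
import hashlib
from typing import Callable, Dict, Mapping, Tuple


def inputs_checksum(inputs: Mapping[str, bytes]) -> str:
    """Combine all project inputs (path -> content) into a single checksum."""
    digest = hashlib.sha256()
    for path in sorted(inputs):  # sorted, so the order of the files does not matter
        digest.update(path.encode("utf-8"))
        digest.update(b"\0")
        digest.update(inputs[path])
        digest.update(b"\0")
    return digest.hexdigest()


class BuildCache:
    """Toy cache: maps an inputs checksum to a previously produced build output."""

    def __init__(self) -> None:
        self._store: Dict[str, str] = {}  # hypothetical storage, in memory only

    def build(
        self,
        inputs: Mapping[str, bytes],
        build_fn: Callable[[Mapping[str, bytes]], str],
    ) -> Tuple[str, bool]:
        """Return (output, cache_hit); build_fn runs only on a cache miss."""
        key = inputs_checksum(inputs)
        if key in self._store:
            return self._store[key], True   # restore the output, skip the build
        output = build_fn(inputs)           # the expensive part: compile and test
        self._store[key] = output
        return output, False
```

Building the same inputs twice calls `build_fn` only once. Changing a single byte in any input produces a different checksum and therefore a cache miss.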

Let’s get our hands on it.

3.1. Build cache configuration

Make sure you use the latest Maven and Gradle or the tools will complain, for example Maven:

The initial configuration is quite simple for both Maven and Gradle.

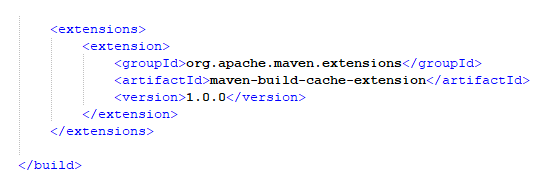

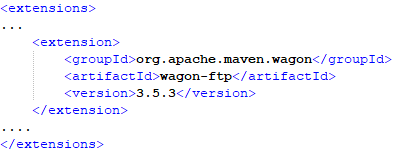

In Maven, use the main pom.xml and the <extensions> element of <build>:

And that is enough for the cache to start working.

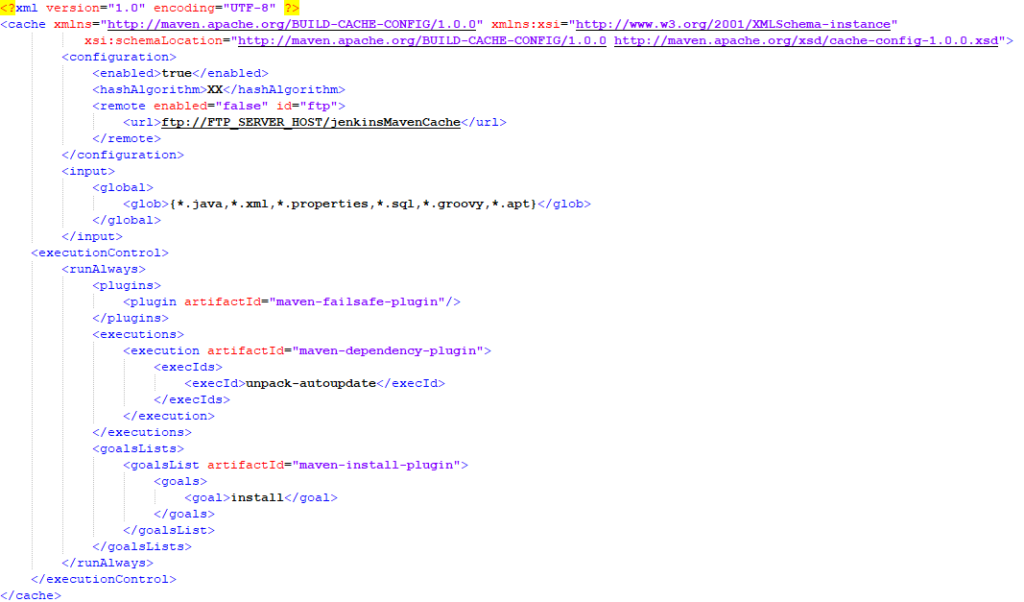

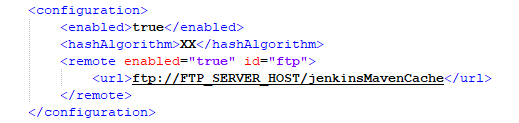

Advanced configuration can be done in a dedicated file, PROJECT\.mvn\maven-build-cache-config.xml

Here is an example config we use in our Consol CM ‘core’ project:

The picture above shows that we:

- have the cache enabled

- use the XX hash algorithm for the checksum calculation

- have the remote cache configured to use FTP but disabled by default (we enable it for Jenkins builds only)

- treat only files with selected extensions (*.java, *.groovy, etc.) as “project inputs”

- always run the “install” goal, even if a cache entry was found and nothing was rebuilt
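
The effect of restricting the “project inputs” to selected extensions can be sketched in a few lines of Python. This is only an illustration, not the extension’s actual code: the function names, the pattern list and the file names are hypothetical, and SHA-256 stands in for the configured hash algorithm.

```python
import fnmatch
import hashlib
import posixpath
from typing import Dict, List, Mapping


def select_inputs(files: Mapping[str, bytes], patterns: List[str]) -> Dict[str, bytes]:
    """Keep only the files whose name matches one of the glob patterns."""
    return {
        path: content
        for path, content in files.items()
        if any(fnmatch.fnmatchcase(posixpath.basename(path), p) for p in patterns)
    }


def checksum(files: Mapping[str, bytes]) -> str:
    """Checksum over the names and contents of the selected files."""
    digest = hashlib.sha256()
    for path in sorted(files):
        digest.update(path.encode("utf-8") + b"\0" + files[path] + b"\0")
    return digest.hexdigest()


PATTERNS = ["*.java", "*.groovy"]  # hypothetical selection of extensions

project = {"src/A.java": b"class A {}", "notes/todo.txt": b"fix later"}
edited_notes = {**project, "notes/todo.txt": b"fixed"}

# The text file is not a project input, so editing it leaves the checksum unchanged.
same_key = checksum(select_inputs(project, PATTERNS)) == checksum(
    select_inputs(edited_notes, PATTERNS)
)
```

In this sketch a change to a file outside the selected patterns does not invalidate the cache entry, while any change to a `*.java` or `*.groovy` file does.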

See what can be configured at https://maven.apache.org/extensions/maven-build-cache-extension/build-cache-config.html

For Gradle the initial config is even simpler:

Either use the “--build-cache” command line parameter when building, or put org.gradle.caching=true in gradle.properties.

Advanced configuration can similarly be done via gradle.properties or the Gradle settings file (settings.gradle).

See what else could be configured at https://docs.gradle.org/current/userguide/build_cache.html

3.2. Cache in practice

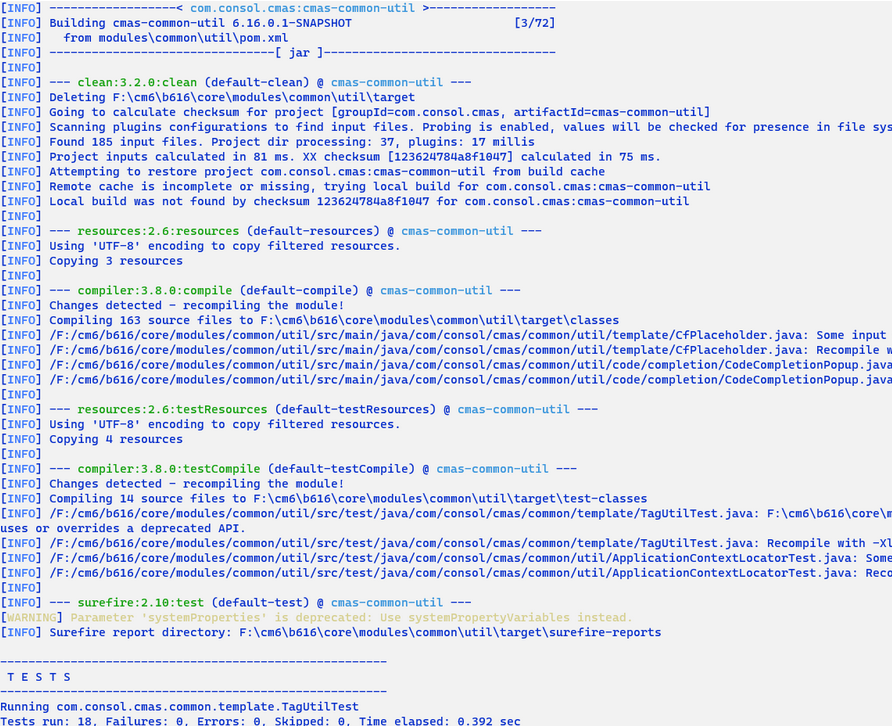

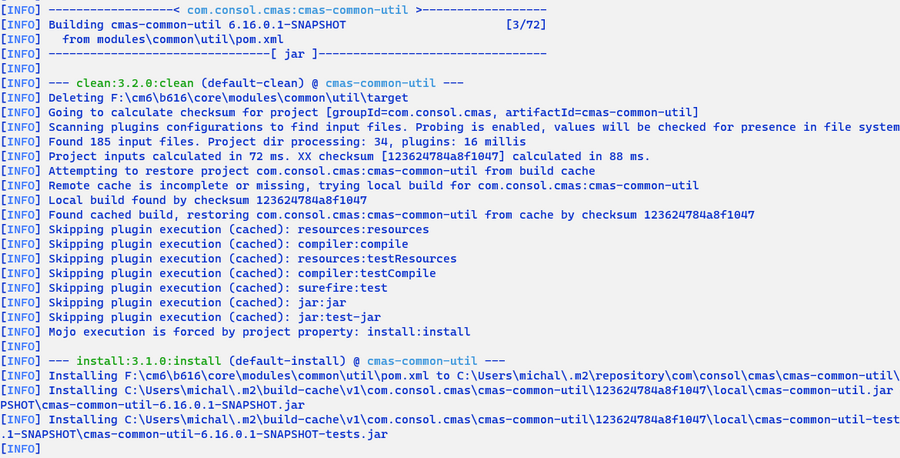

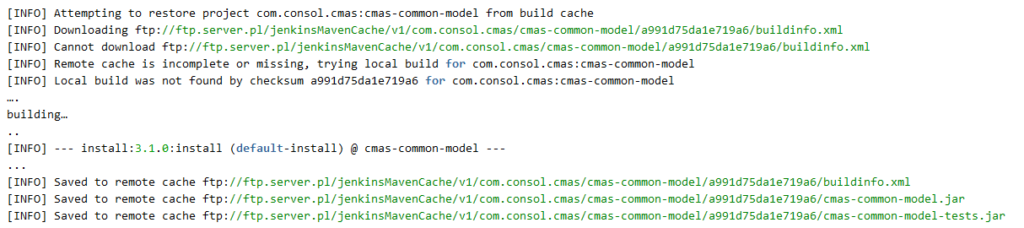

Here is some sample build output when the cache is configured.

In case no cache is present yet:

Note that no cache entry was found for the calculated checksum, so the full compilation and all tests take place.

Another run of the build:

Now the build products are found and restored from cache – no rebuilding or retesting is executed.

“install” takes place in both cases as explicitly configured.

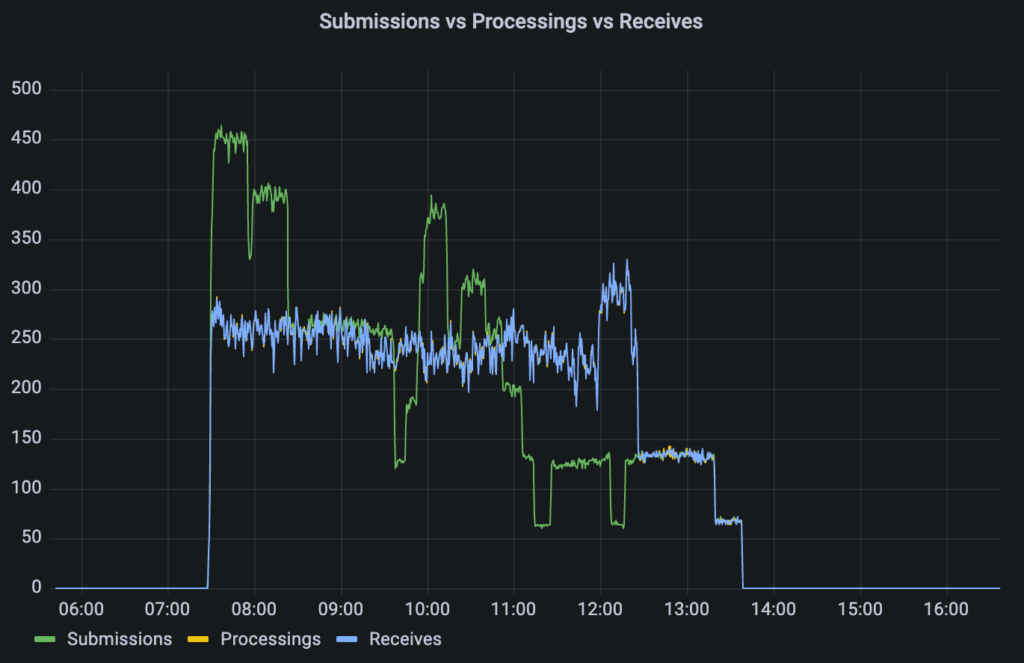

3.3. Sample results

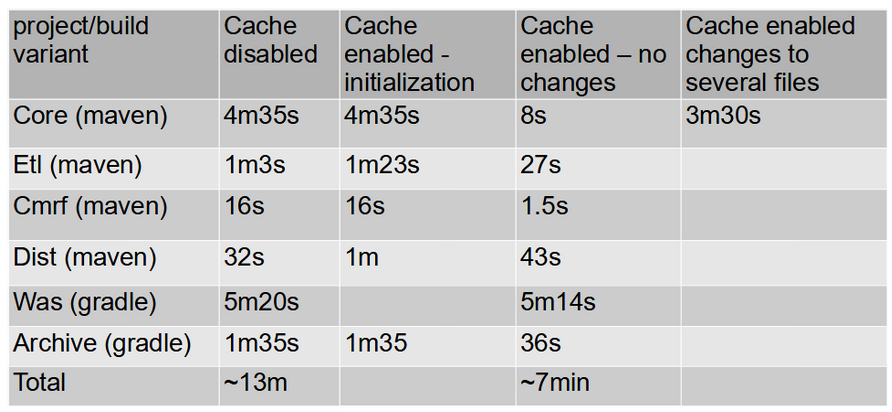

After some initial hassle (the outdated Maven and Gradle mentioned above, some memory issues, and documentation that was not yet perfect, as the first version of the Maven build cache extension had just been released at the time of this research) we finally saw a tremendous improvement in build time. We tried several of our modules and saw the most significant gains in our biggest project (by number of Java classes), Consol CM “core”:

4.5m -> 8s – that is something! 🙂

Of course, when there are code changes and part of the system needs to be rebuilt, the times look worse.

Also, the improvements are not always visible; that depends on the characteristics of the given project. In the Consol CM “dist” project we even saw a decrease in performance. That is because the project is actually a packaging or assembly project. There is not much actual code compilation but rather work on bigger files (like the cm6 ear and distribution zips), and calculating the checksum/hash of such files takes a significant amount of time.

3.4. Remote cache variant

It’s great to have a local Maven cache. If you have a multi-module project, the results are really impressive.

But it would be even better to just download the most recent cached builds and not have to generate them locally. The Maven build cache extension offers such a possibility.

https://maven.apache.org/extensions/maven-build-cache-extension/remote-cache.html

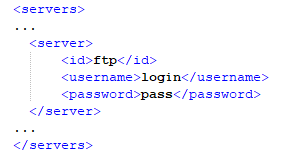

Sample remote configuration:

For our tests we used a local FTP server, but SSH, HTTP or any other protocol supported by Maven Wagon can be used. For the FTP use case two additional steps need to be done:

- add the FTP wagon extension to the parent pom.xml

- add a server section to the Maven settings.xml file (where the id tag equals the id in the remote section of the cache config)

To fill the FTP server with data, the “save to remote” parameter needs to be added.

Full Maven command:

mvn clean install -Dmaven.build.cache.remote.save.enabled=true

Sample output:

3.4.1. Results

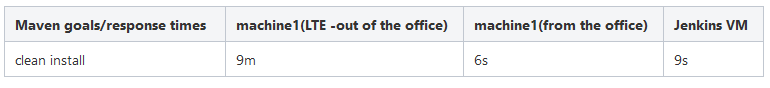

Building one of our projects when the cache is already available on the remote server.

Cache size: ~60 MB

3.4.2. Summary

As you can see from the results table, the remote cache is great when the network factor can be neglected.

In that case there is almost no time difference between a local build with the cache already present and downloading the whole cache from the remote repository.

When the network is a significant factor, using the remote cache is not the best idea: the execution time can be much longer than building the project without a cache.
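
A rough back-of-the-envelope model shows why the network matters. The sketch below is a deliberate simplification (it ignores latency and server load, and counts the time to unpack artifacts only if passed as `restore_s`). The function names, the build time and the bandwidth figures are hypothetical; only the ~60 MB cache size is taken from the results above.

```python
def download_seconds(cache_size_mb: float, bandwidth_mbit_s: float) -> float:
    """Time to fetch the cache; size in megabytes, bandwidth in megabits per second."""
    return cache_size_mb * 8 / bandwidth_mbit_s


def remote_cache_pays_off(
    cache_size_mb: float,
    bandwidth_mbit_s: float,
    full_build_s: float,
    restore_s: float = 0.0,
) -> bool:
    """True if downloading and restoring the cache beats a full local build."""
    return download_seconds(cache_size_mb, bandwidth_mbit_s) + restore_s < full_build_s


CACHE_SIZE_MB = 60   # cache size reported above
FULL_BUILD_S = 120   # hypothetical full build time, not a measured value

fast_link = remote_cache_pays_off(CACHE_SIZE_MB, 100, FULL_BUILD_S)  # 100 Mbit/s
slow_link = remote_cache_pays_off(CACHE_SIZE_MB, 2, FULL_BUILD_S)    # 2 Mbit/s
```

With these assumed numbers the download takes about 5 seconds on the fast link and 4 minutes on the slow one, so the remote cache only pays off in the first case.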

Platform dependency is another disadvantage of the current cache implementation. The generated cache hashes can differ depending on the platform used (Linux, Windows, etc.).
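
One possible cause (an assumption here, not something verified in this research) is that the same source file is checked out with different line endings on different platforms. The Python sketch below only illustrates that effect; the helper names are hypothetical and SHA-256 stands in for whatever hash the cache really uses.

```python
import hashlib


def checksum(content: bytes) -> str:
    """Hash of the raw file content, byte for byte."""
    return hashlib.sha256(content).hexdigest()


def normalize_line_endings(content: bytes) -> bytes:
    """Convert Windows line endings (CRLF) to Unix line endings (LF)."""
    return content.replace(b"\r\n", b"\n")


unix_source = b"class A {\n}\n"          # as checked out on Linux
windows_source = b"class A {\r\n}\r\n"   # same file with CRLF line endings

differs = checksum(unix_source) != checksum(windows_source)
matches_after_normalizing = checksum(normalize_line_endings(windows_source)) == checksum(unix_source)
```

The two checkouts contain the same code but hash differently, so a cache entry produced on one platform would not be found on the other unless the content is normalized first.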

4. Easier troubleshooting

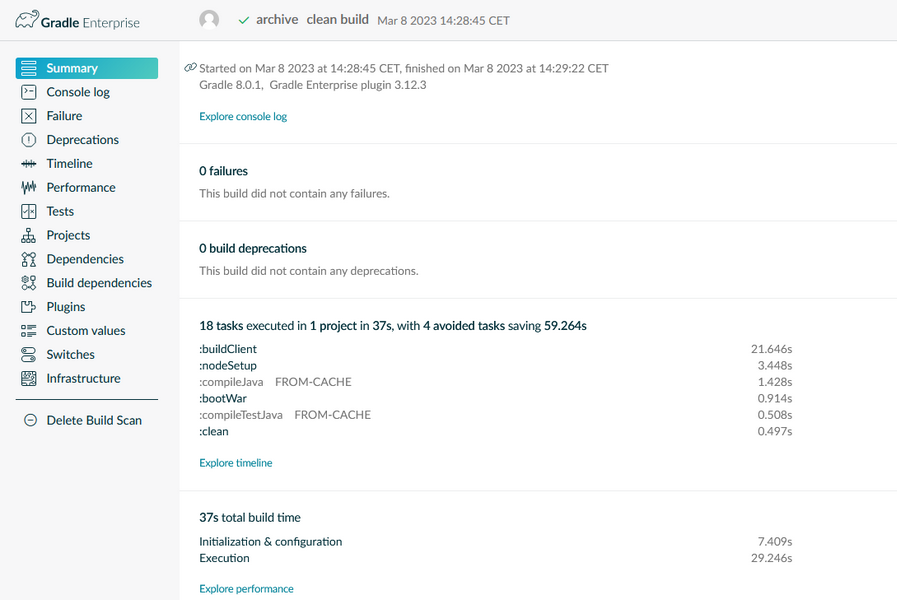

How to achieve that? Build Scans (TM) to the rescue.

Gradle made part of its Gradle Enterprise freely available: the basic Build Scans (TM). The drawback of the free version is that you need to generate such a scan manually and send it to Gradle’s cloud, which may also be a security issue.

But what is it? By definition (https://scans.gradle.com/) “A Build Scan™ is a shareable record of a build that provides insights into what happened and why. You can create a Build Scan at scans.gradle.com for the Gradle and Maven build tools for free.”

We’ve got some scans of our projects “CM Archive” and “WAS”: https://scans.gradle.com/s/rsog7vun57cbw, https://scans.gradle.com/s/orshkwanfmevu

Feel free to explore them.

You can see the summary of a build:

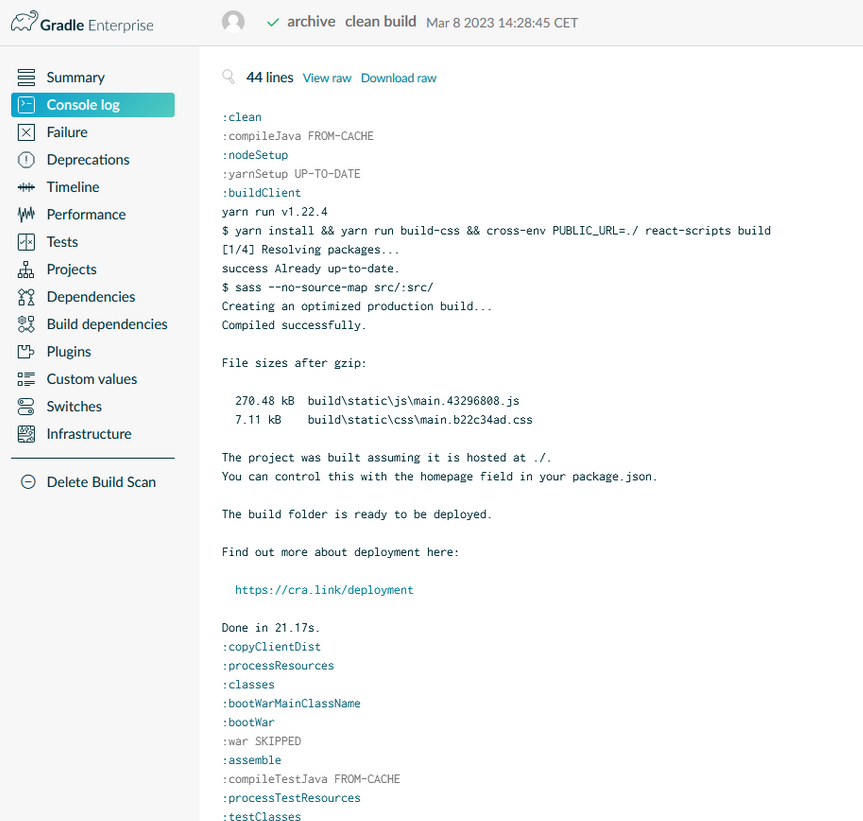

and we can go into details, for example see what the build console looked like:

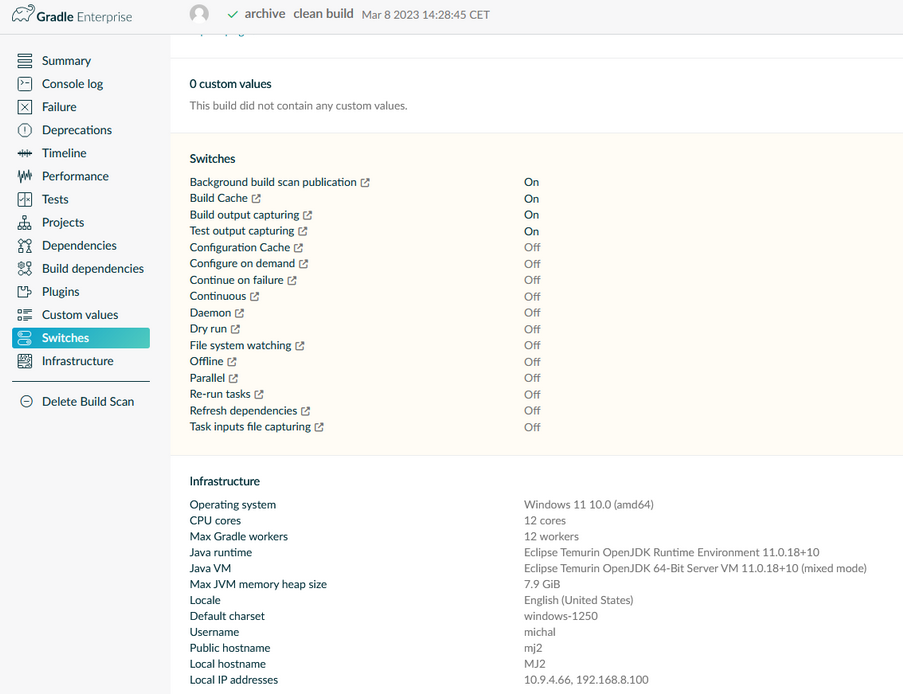

Which options were switched on and what the build environment details were:

Other details like build times of specific tasks, how much time was saved thanks to the build cache, etc.

How cool is that? 🙂

Now imagine you can also compare such build records to detect differences which may lead to a build failure, or drill down into even more details, and you have the Gradle Enterprise version of that feature 🙂

5. Summary

Gradle states that there is an increasing need for DPE specialists, and new full-time job offers for them are posted daily. We can agree with that, looking at how big the concept is and how powerful the GE system is.

If we really wanted to make proper use of it, we would probably need someone looking at it regularly. There are lots of insights and measures to look at, and things to tweak. And the build process, being a process, changes constantly and would require such monitoring. It is a pity we could not get our hands on the GE system in the context of Consol CM, just for tests, though the publicly available instances give a general idea of what it is about.

Regarding the build cache, it is worth mentioning once more that it may not always be the cure; it depends on the project. That may also change with new versions: as said earlier, the extensions are quite new (Maven: 1.0 release at the beginning of 2023). And a nice side effect of using them is being forced to keep the build tool versions up to date 🙂